How to Cache GraphQL Requests Using Kong and StepZen

Roy Derks

GraphQL is often used as an API gateway for microservices, but it can also play nicely with existing API gateway solutions such as Kong, an API gateway and microservice management platform. As an API gateway, Kong has many capabilities that you can use to enhance GraphQL APIs. One of those capabilities is adding a proxy cache to the upstream services you add to Kong. But when it comes to caching with GraphQL, there are several caveats you must consider due to the nature of GraphQL. In this post, we will go over the challenges of caching a GraphQL API and how an API gateway can help with this.

Why Use API Caching?

Caching is an important part of any API: you want to ensure that your users get the best possible response time on their requests. Of course, caching is not a one-size-fits-all solution, and there are a lot of different strategies for different scenarios.

Suppose your API is a high-traffic, critical application. It's going to get hit hard by many users, and you want to make sure it can scale with demand. Caching can help you do that by reducing the load on your servers and improving performance for all users, while potentially reducing costs and providing additional security features. Caching with Kong is often applied at the proxy layer, meaning that the proxy caches requests and serves the response of the last call to an endpoint if it exists in the cache.

GraphQL APIs have their challenges in caching that you need to be aware of before implementing a caching strategy, as you'll learn in the next section.

Challenges of Caching with GraphQL

GraphQL has been getting increasingly popular amongst companies. GraphQL is best described as a query language for APIs. It's declarative, which means you write what you want your data to look like and leave the details of that request up to the server. This is good for us because it makes our code more readable and maintainable by separating concerns into client-side and server-side implementations.

GraphQL APIs can solve challenges like overfetching and underfetching (the latter often shows up as the N+1 problem). But as with any API, a poorly designed GraphQL API can result in some horrendous N+1 issues. Users have control over the response of a GraphQL request by modifying the query they send to the GraphQL API, and they can even nest the data on multiple levels. While there are certainly ways to alleviate this problem on the backend via the design of the resolvers or caching strategies, what if there was a way to make your GraphQL API blazing fast without changing a single line of code?

StepZen adds caching to queries and mutations that rely on @rest; see here for more information.

By using Kong and the GraphQL Proxy Cache Advanced plugin, you totally can! Let's take a look.

Set up caching for GraphQL with Kong

When you're using Kong as an API gateway, it can be used to proxy requests to your upstream services, including GraphQL APIs. With the enterprise plugin GraphQL Proxy Cache Advanced, you can add proxy caching to any GraphQL API. You can enable this plugin on a GraphQL service, on a route, or even globally. Let's try this out by adding a GraphQL service to Kong created with StepZen.

With StepZen, you can create GraphQL APIs declaratively by using the CLI or writing GraphQL SDL (Schema Definition Language). It's a language-agnostic way to create GraphQL APIs, as it relies completely on the GraphQL SDL. The only thing you need to get started is an existing data source, for example, a (No)SQL database or a REST API. You can then generate a schema and resolvers for this data source using the CLI or write the connections using GraphQL SDL. The GraphQL schema can then be deployed to the cloud directly from your terminal, with built-in authentication.

Create a GraphQL API using StepZen

To get a GraphQL API using StepZen, you can connect your own data source or use one of the pre-built examples from GitHub. In this post, we'll be using a GraphQL API created on top of a MySQL database from the examples. By cloning this repository to your local machine, you'll get a set of configuration files and .graphql files containing the GraphQL schema. To run the GraphQL API and deploy it to the cloud, you need the StepZen CLI.

You can install the CLI from npm:

npm i -g stepzen

After installing the CLI, you can sign up for a StepZen account to deploy the GraphQL API on a private, secured endpoint. Optionally, you can continue without signing up, but bear in mind that the deployed GraphQL endpoint will be public. To start and deploy the GraphQL API, you can run:

stepzen start

Running this command will return a deployed endpoint in your terminal (like https://public3b47822a17c9dda6.stepzen.net/api/with-mysql/__graphql). Also, you can now find the deployed GraphQL API in your StepZen dashboard.

Add a GraphQL Service to Kong

The service you've created in the previous section can now be added to your Kong gateway by using Kong Manager or the Admin API. Let's use the Admin API to add our newly created GraphQL API to Kong, which only requires us to send a few cURL requests. First, we need to add the service, and then a route for this service needs to be added.

You can run the following command to add the GraphQL service and route. Depending on the setup of your Kong gateway, you might need to add authentication headers to your request:

curl -i -X POST \
  --url http://localhost:8001/services/ \
  --data 'name=graphql-service' \
  --data 'url=https://public3b47822a17c9dda6.stepzen.net/api/with-mysql/__graphql'

curl -i -X POST \
  --url http://localhost:8001/services/graphql-service/routes \
  --data 'hosts[]=stepzen.net'

The GraphQL API has now been added as an upstream service to the Kong gateway, meaning you can already proxy to it. Before doing so, let's add the GraphQL Proxy Cache Advanced plugin to the service, which can also be done using a cURL command:

curl -i -X POST \
  --url http://localhost:8001/services/graphql-service/plugins/ \
  --data 'name=graphql-proxy-cache-advanced' \
  --data 'config.strategy=memory'

With Kong proxying the GraphQL API and this plugin enabled, all requests are cached automatically at the query level. The default TTL (Time To Live) of the cache is 300 seconds unless you overwrite it (for example, with 600 seconds) by adding --data 'config.cache_ttl=600' to the command above. In the next section, we'll explore the cache by querying the proxied GraphQL endpoint.

Querying a GraphQL API

The proxied GraphQL API will be available through Kong on your gateway endpoint. When you send a request to it, Kong expects a Host header containing the hostname you configured on the route for the GraphQL API. As it is a request to a GraphQL API, keep in mind that all requests should be POST requests with the Content-Type: application/json header and a GraphQL query in the body.

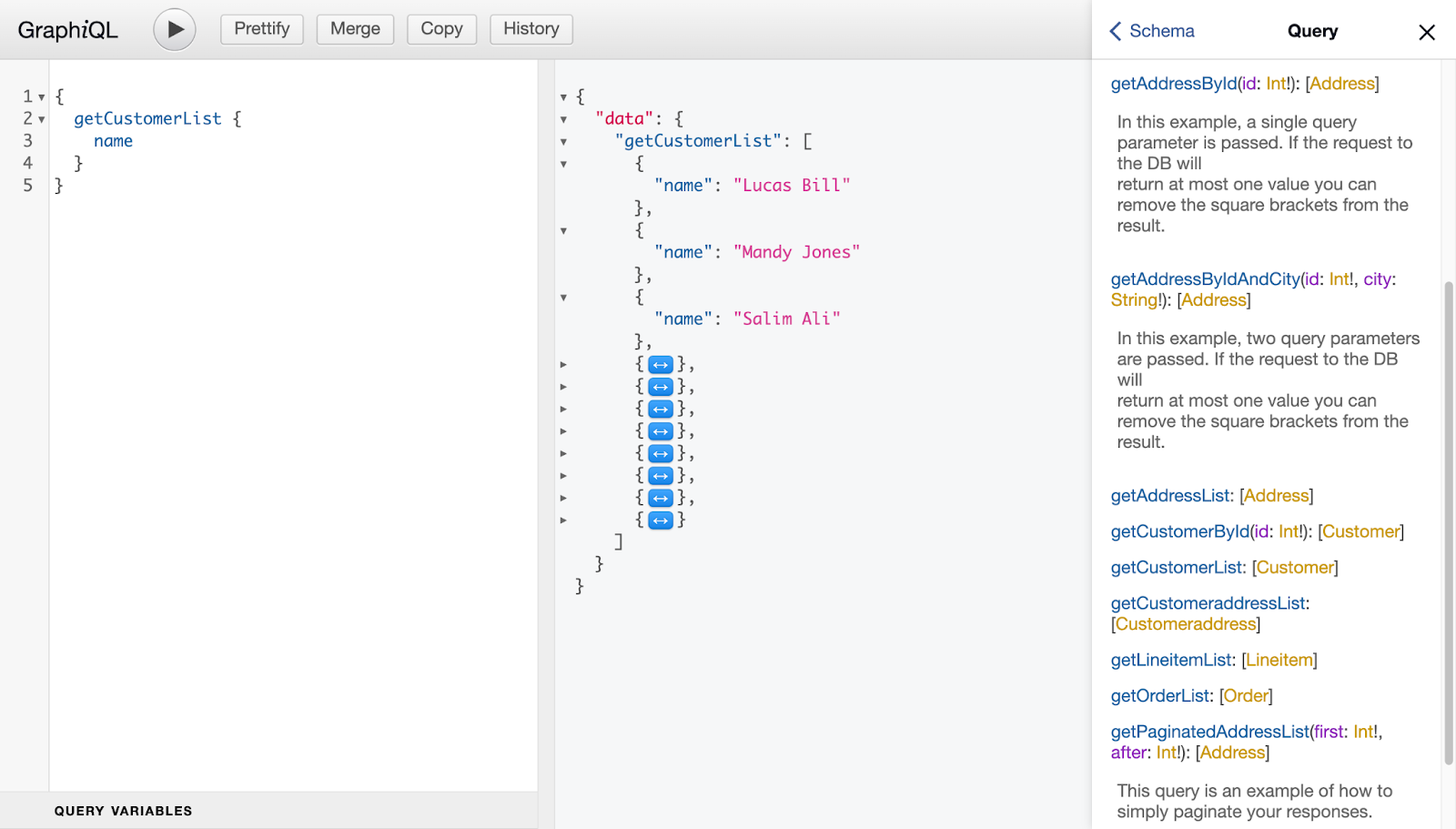

To send a request to the GraphQL API, you must first construct a GraphQL query. When you visit the endpoint where the GraphQL API is deployed, you find a GraphiQL interface - an interactive playground to explore the GraphQL API. In this interface, you can find the complete schema, including the queries and possibly other operations the GraphQL API accepts.

In this interface, you can send queries and explore the result and the schema as you see above. To send this same query to the proxied GraphQL API, you can use the following cURL:

curl -i -X POST 'http://localhost:8000/' \
  --header 'Host: stepzen.net' \
  --header 'Content-Type: application/json' \
  --data-raw '{"query":"query getCustomerList {\n getCustomerList {\n name\n }\n}","variables":{}}'

This cURL will return both the response result and the response headers:

HTTP/1.1 200 OK
Content-Type: application/json; charset=utf-8
Content-Length: 445
Connection: keep-alive
X-Cache-Key: 5abfc9adc50491d0a264f1e289f4ab1a
X-Cache-Status: Miss
StepZen-Trace: 410b35b512a622aeffa2c7dd6189cd15
Vary: Origin
Date: Wed, 22 Jun 2022 14:12:23 GMT
Via: kong/2.8.1.0-enterprise-edition
Alt-Svc: h3=":443"; ma=2592000,h3-29=":443"; ma=2592000
X-Kong-Upstream-Latency: 256
X-Kong-Proxy-Latency: 39

{
  "data": {
    "getCustomerList": [
      {
        "name": "Lucas Bill"
      },
      {
        "name": "Mandy Jones"
      },
      …
    ]
  }
}

The output above shows the response of the GraphQL API, which is a list of fake customers with the same structure as the query we sent. The response headers are printed as well, and two of them deserve attention: X-Cache-Status and X-Cache-Key. The first header shows whether the cache was hit, and the second shows the key of the cached response. To cache the response, Kong looks at the endpoint you send the request to and the contents of the POST body, which includes the query and any query variables. The GraphQL Proxy Cache Advanced plugin caches whole responses only, so reusing part of a GraphQL query in a different request does not produce a cache hit for that part.
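To make the idea of a request-level cache key concrete, here is a minimal sketch. It is an illustration only and not Kong's actual key derivation: the cache_key helper is hypothetical, it assumes md5sum from GNU coreutils is available, and the email field in the second query is made up for the example.

```shell
#!/usr/bin/env bash
# Illustrative only: this is NOT how Kong computes X-Cache-Key.
# Hypothetical helper: hash the route host together with the raw POST body.
cache_key() {
  local host="$1" body="$2"
  printf '%s\n%s' "$host" "$body" | md5sum | cut -d' ' -f1
}

# Two request bodies; the "email" field is an assumption for this example.
body_names='{"query":"query getCustomerList { getCustomerList { name } }","variables":{}}'
body_emails='{"query":"query getCustomerList { getCustomerList { name email } }","variables":{}}'

key_a=$(cache_key "stepzen.net" "$body_names")
key_b=$(cache_key "stepzen.net" "$body_names")
key_c=$(cache_key "stepzen.net" "$body_emails")

# Identical requests map to the same key; any change in the body gives a new key.
[ "$key_a" = "$key_b" ] && echo "same request, same key"
[ "$key_a" != "$key_c" ] && echo "different selection set, different key"
```

Because the whole body feeds into the key in this sketch, two queries that overlap only partially are treated as unrelated cache entries, which mirrors the behavior described above.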

On the first request, the value for X-Cache-Status will always be Miss, as no cached response is available yet. However, X-Cache-Key will always have a value, because a cache entry is created on that request. The value for X-Cache-Key is therefore either the key of the newly created cache entry or the key of an existing entry from a previous request.

Let's make this request again and focus on the values of these two response headers:

X-Cache-Status: Hit

X-Cache-Key: 5abfc9adc50491d0a264f1e289f4ab1a

The second time we hit the proxied GraphQL API endpoint, the cache was hit, and the cache key is the same as for the first request. This means the previous request's response has been cached and is now returned: the request was not forwarded to the upstream GraphQL API, and the cached response was served instead, as the cache status indicates. Unless you change the default TTL of the cache, the cached response stays available for 300 seconds. If you send this same request to the proxied GraphQL API after the TTL expires, the cache status will be Miss again.
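If you only care about the cache status, you can filter it out of the response headers. The following is a small sketch under the assumptions used in this post (Kong proxy on localhost:8000, route host stepzen.net); the helper names cache_status and query_cache_status are hypothetical.

```shell
#!/usr/bin/env bash
# Print the value of the X-Cache-Status header from HTTP headers read on stdin.
cache_status() {
  tr -d '\r' | awk -F': ' 'tolower($1) == "x-cache-status" { print $2; exit }'
}

# Hypothetical helper: send the query from this post through the Kong proxy.
# -s silences progress output, -o /dev/null discards the body,
# and -D - writes the response headers to stdout for cache_status to parse.
query_cache_status() {
  curl -s -o /dev/null -D - -X POST 'http://localhost:8000/' \
    --header 'Host: stepzen.net' \
    --header 'Content-Type: application/json' \
    --data-raw '{"query":"query getCustomerList {\n getCustomerList {\n name\n }\n}","variables":{}}' \
    | cache_status
}
```

Calling query_cache_status twice in a row against a fresh cache should, given the behavior described above, print Miss for the first call and Hit for the second, as long as the second call happens within the TTL.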

You can also inspect the cached response directly through the GraphQL Proxy Cache Advanced plugin. To get the cached response from the previous request, you can use the plugin's endpoint on the Admin API of your Kong instance, passing the cache key. For example:

curl http://localhost:8001/graphql-proxy-cache-advanced/5abfc9adc50491d0a264f1e289f4ab1a

This request returns HTTP 200 OK if the cached value exists and HTTP 404 Not Found if it doesn't. Using the Kong Admin API, you can delete a cached response by its key or even purge all cached responses. See the Delete cached entity section in the GraphQL Proxy Cache Advanced documentation for more information on this subject.
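As a rough sketch of what deleting and purging could look like, the helpers below wrap the same Admin API path used above. The DELETE endpoints reflect my reading of the plugin documentation and should be verified against the Kong version you run; the function names and the ADMIN_URL variable are hypothetical.

```shell
#!/usr/bin/env bash
# Assumption: the Kong Admin API listens on localhost:8001, as elsewhere in this post.
ADMIN_URL="${ADMIN_URL:-http://localhost:8001}"

# Build the Admin API URL for a single cache entry, given its X-Cache-Key value.
cache_entry_url() {
  printf '%s/graphql-proxy-cache-advanced/%s' "$ADMIN_URL" "$1"
}

# Delete one cached response by key (endpoint assumed from the plugin docs).
delete_cache_entry() {
  curl -i -X DELETE "$(cache_entry_url "$1")"
}

# Purge all cached responses (endpoint assumed from the plugin docs).
purge_cache() {
  curl -i -X DELETE "$ADMIN_URL/graphql-proxy-cache-advanced"
}
```

For example, delete_cache_entry 5abfc9adc50491d0a264f1e289f4ab1a would target the entry created earlier in this post. Depending on your gateway setup, you might need to add authentication headers to these requests as well.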

Conclusion

This post taught you how to add a GraphQL API to Kong to create a proxied GraphQL endpoint. By adding it to Kong, you not only get all the features that Kong offers as a proxy service, but you can also implement caching for the GraphQL API. You can do this by installing the GraphQL Proxy Cache Advanced plugin, which is available for Plus and Enterprise instances. With this plugin, you can implement proxy caching for GraphQL APIs, letting you cache the response of any GraphQL request.

Follow us on Twitter or join our Discord community to stay updated about our latest developments.